Consumer Protection Tuesday: AI-Powered Smart Contract Auditing at Coinbase

TLDR: Introducing Frosty, an AI-powered smart contract auditing tool that currently outperforms every other tool we evaluated for vulnerability detection. Each run takes only about 1-2 hours to complete and can be 100x cheaper than a manual audit.

Why It Matters

As AI enables developers to build faster onchain, it is critical that security moves just as fast, and with the same rigor. At Coinbase, we’re committed to using AI to stay one step ahead of attackers and find new ways to make our onchain products safer. Through our internal experiments with AI for security, we saw that these tools are very capable of finding security vulnerabilities when properly orchestrated. Moreover, to stay at the forefront of onchain security, we knew that adopting AI-powered tooling in our workflows was a must, since external threat actors are now armed with the same powerful models.

In a comprehensive evaluation that spanned several months, we tested a total of seven AI-powered tools for smart contract security, including internally built solutions. We are thrilled to share that one of our in-house tools, Frosty, came out on top.

“In smart contract security, the stakes are very high,” said Anmol Malhotra, Senior Director, Security Advisory Services & Blockchain Security at Coinbase. “Unlike web2, a single bug in immutable code can be enough to drain billions, and most teams still don’t have tools that match that level of risk. Frosty gives our web3 developers and BlockSec team high-signal, high-coverage vulnerability detection and triage, so humans can stay focused on the hardest problems.”

Our Evaluation Process

Over the past few months, our Protocol Security team built a robust evaluation framework that captures both the quantitative and qualitative characteristics we consider important in an AI tool. The most important signals were Precision (the share of reported findings that are real), Recall (coverage of known vulnerabilities), and F1 Score (the harmonic mean of the two), computed both overall and specifically for Critical/High/Medium findings, the severity levels that matter most for security outcomes. We also factored in qualitative signals such as the tool’s ability to ingest additional context, the quality of the recommendations provided to fix identified issues, the level of effort needed to run the tool, and whether the tool could integrate with our CI pipeline.
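To make the three quantitative signals concrete, here is a minimal sketch of how they can be computed from counts of matched and unmatched findings. The function name and the example counts are hypothetical and are not taken from our evaluation framework or its results.

```python
def precision_recall_f1(true_positives: int, false_positives: int, false_negatives: int):
    """Return (precision, recall, f1) for one tool's findings against a benchmark."""
    reported = true_positives + false_positives   # everything the tool flagged
    known = true_positives + false_negatives      # everything in the benchmark
    precision = true_positives / reported if reported else 0.0
    recall = true_positives / known if known else 0.0
    denom = precision + recall
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Made-up counts for illustration only, not results from the evaluation.
p, r, f1 = precision_recall_f1(true_positives=30, false_positives=20, false_negatives=30)
```

Because F1 is a harmonic mean, a tool cannot score well by maximizing only one side: flagging everything raises recall but sinks precision, and the F1 score falls with it.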

We gathered an evaluation benchmark dataset of 33 real-world security audits comprising 434 verified vulnerabilities and ran each tool against a subset of 10–20 repos. Not all tools could run every repo: some had service-level constraints that capped run volume, some were discontinued early when intermediate results showed insufficient value to justify completing the full dataset, and others failed on specific repos due to unsupported languages or size limits. To manage comparison bias from uneven repo coverage, we prioritized running each tool against the same subset of repos, so that performance differences reflect tool quality rather than dataset variance.
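One simple way to reason about uneven coverage is to look at the repos that every tool completed. The sketch below is only an illustration of that idea: the tool and repo names are hypothetical, and it is an assumption, not a description of our framework, that a comparison would be restricted to this intersection.

```python
def common_repos(runs: dict[str, set[str]]) -> set[str]:
    """Return the repos that every tool completed."""
    repo_sets = list(runs.values())
    return set.intersection(*repo_sets) if repo_sets else set()


# Hypothetical coverage map: tool name -> repos it ran successfully.
runs = {
    "tool_a": {"repo1", "repo2", "repo3"},
    "tool_b": {"repo2", "repo3", "repo4"},
    "tool_c": {"repo2", "repo3"},
}
shared = common_repos(runs)
```

Scoring tools on a shared set like this keeps an easy or hard repo from helping or hurting only the tools that happened to run it.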

Frosty was the clear overall winner for critical/high/medium findings:

Best F1 score, the single most important metric for practical utility. To put this result into perspective, Frosty’s F1 score was 1.5x that of the second-best tool and at least 3x that of most others.

Highest precision. This is critical for auditor trust and developer adoption, as false-positive fatigue is one of the primary risks when using any security tooling.

How Frosty Works

Frosty didn't come out of nowhere. Our team previously spent months building Base Castle, our first attempt at AI-powered auditing, where we ran dozens of experiments across different architectures and approaches. The results weren't the strongest in our benchmark, but the lessons were invaluable. Armed with these learnings, we built Frosty as a fully autonomous, sequential and multiphased architecture, meaning that each phase only runs after the previous one has been completed, with all phases running without any human intervention.

Rather than explicitly directing how these agents should find vulnerabilities, we have put our development efforts into creating a custom harness and orchestration process that allows the agents to reason freely about the codebase on their own, as well as custom skills that provide high-level guidance to the agents. We have also spent significant time addressing false positives and making the user experience seamless.

The guiding principle of this multiphased, multi-agent approach is to avoid context rot, where model performance degrades as the context window fills with stale or irrelevant information from earlier steps. As the audit process is sequential, each phase is performed with an entirely fresh context window, so only the most important context is shared between each phase’s agents. We also minimize the context each agent ingests directly, by keeping agent prompts minimal and having each agent inherit only the skills that are useful in its phase.
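The fresh-context idea can be sketched as a sequential loop in which each phase builds its working context from scratch and passes forward only a compact summary. This is a simplified illustration, not Frosty's actual harness: `Handoff`, `run_phase`, and `run_pipeline` are hypothetical names, and the agent's work is replaced by a placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Compact summary passed between phases; the only state that survives a phase."""
    notes: list[str] = field(default_factory=list)


def run_phase(name: str, handoff: Handoff) -> Handoff:
    # Fresh context: built from scratch for each phase, seeded only by the handoff.
    context = [f"phase: {name}", *handoff.notes]
    # Placeholder for the agent's work; a real agent would call a model here.
    context.append(f"scratch work for {name}")
    # Only a distilled note goes forward; the scratch context is discarded.
    return Handoff(notes=handoff.notes + [f"{name}: summary"])


def run_pipeline(phases: list[str]) -> Handoff:
    handoff = Handoff()
    for name in phases:  # sequential: each phase starts after the previous one ends
        handoff = run_phase(name, handoff)
    return handoff


result = run_pipeline(["scoping", "vulnerability_hunting", "triaging"])
```

The design choice this illustrates is that the amount of state crossing a phase boundary stays small and deliberate, however much intermediate reasoning a phase produced.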

In addition to managing context, the other core design principle is to allow the same agents to run multiple times. Although this may sound redundant, independent runs can produce different outputs because AI models are non-deterministic. Taking this learning into account, we allow each agent to run multiple times within a phase (i.e., to hunt for additional bugs with fresh context windows), which lets us surface more true positives and drive recall higher.
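A rough way to see why repeated runs help: if the findings of several runs are merged, the merged set can only grow. The sketch below uses hypothetical finding labels, and the probability helper assumes that runs are independent and that each one finds a given bug with the same probability, which is a simplification rather than a measured property of Frosty.

```python
def union_of_runs(runs: list[set[str]]) -> set[str]:
    """Merge the findings of several independent runs."""
    found: set[str] = set()
    for findings in runs:
        found |= findings
    return found


def chance_found(per_run_probability: float, runs: int) -> float:
    """Probability that at least one of `runs` independent runs finds a given bug."""
    return 1.0 - (1.0 - per_run_probability) ** runs


# Hypothetical findings from three runs of the same agent.
runs = [
    {"reentrancy", "unchecked-call"},
    {"reentrancy", "oracle-manipulation"},
    {"access-control"},
]
combined = union_of_runs(runs)
five_run_chance = chance_found(0.3, 5)  # about 0.83 under the independence assumption
```

Under these assumptions, a bug that a single run finds 30% of the time is found by at least one of five runs about 83% of the time.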

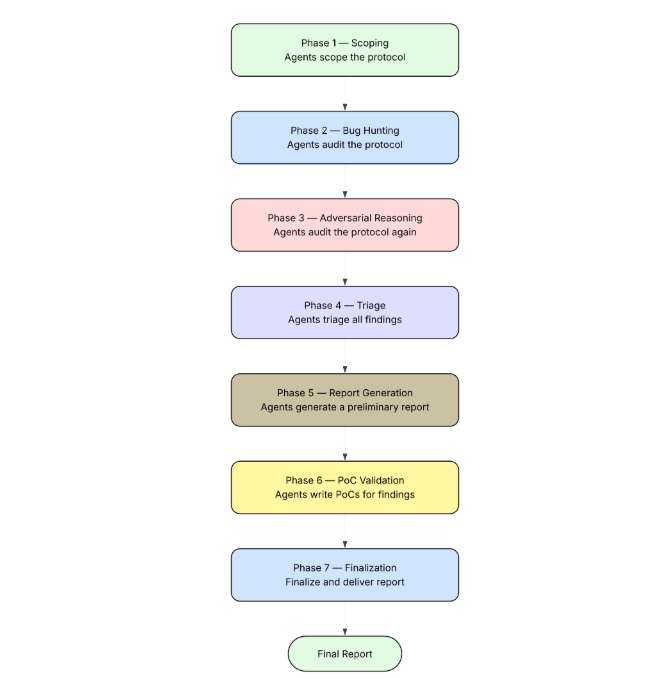

With those two principles in mind, here is what the multiphased workflow looks like at a high level:

Scoping - agents independently scope and threat model the protocol

Vulnerability Hunting - agents independently hunt for bugs

Adversarial Reasoning - agents independently hunt for bugs (again), but from a more adversarial viewpoint

Triaging - agents deduplicate and triage all reported findings

Report Generation - agents generate a preliminary audit report

Proof of Concept Writing - agents generate PoCs to validate findings and reduce false positives

Report Cleanup and Finalization - after PoCs are written, findings are kept in the report or adjusted in severity, depending on whether they were validated or invalidated

This workflow is entirely autonomous and can be completed in 1-2 hours. The output is a formatted audit report in a markdown file, ready to be viewed by our in-house security team or smart contract developers. We use two skills from Trail of Bits in the first and third phases of the workflow.

The Impact

Through our internal usage of Frosty, we have already observed measurable improvements to our security review process:

Enhanced focus on high-priority areas. By quickly catching surface-level bugs across large codebases, Frosty has enabled our security engineers to spend more time digging deeper into more complex code and interactions.

More rigorous reviews. All smart contracts at Coinbase already go through multiple rounds of audits before deployment. Frosty adds yet another round of review that every smart contract now goes through. In some instances, Frosty is able to catch high/critical vulnerabilities before our security engineers do.

Surfacing blind spots. Although AI may not be able to surface 100% of bugs in a smart contract, it does offer different perspectives and highlights threats that human auditors may not consider. Even when these observations are low impact individually, they allow our security engineers to chain together multiple concerns and interactions, which may lead to higher-impact bugs.

Overall, Frosty has greatly augmented our security review process, and our team will continue to improve the tool through our own development.

What’s Next

Currently, Frosty is available internally and is being run on every smart contract built within Coinbase before deployment. However, in the near future, we are looking forward to the tool becoming available to external developers.

Disclaimer: Frosty today does not replace the traditional human-led smart contract auditing process. It can still miss deep and complex vulnerabilities that human auditors with more context and experience may be better placed to catch.