Optimizing the Rule Creation Process for Fraud Prevention

TL;DR: At Coinbase, ML models handle long-term fraud defense while rules let us respond fast to active attacks. We rebuilt the backtesting data layer, automated schema evolution, and gave analysts a standardized notebook workflow backed by ML libraries. Backtesting is now faster, and analysts can go from spotting a fraud pattern to shipping a new rule in hours, not days.

A common debate when building a system to fight fraud revolves around Models vs. Rules. Many companies treat this as an either-or decision, choosing between sophisticated machine learning (ML) models or agile, rule-based systems. At Coinbase, we believe effective risk management requires both.

Our approach is straightforward: Models for the marathon, Rules for the sprint. Machine learning models serve as our strategic, long-term defense, acting as the primary decision makers for complex patterns. Rules serve as our tactical lever for immediate intervention, acting as the emergency brake for active attacks.

To maximize the effectiveness of this approach, we are creating a unified framework where models and rules work in harmony, continuously reinforcing each other. This creates a powerful feedback loop: tactical rules act as scouts, identifying emerging threats that feed into our models for training. This ensures that today’s fixes evolve into tomorrow’s robust, sophisticated defenses.

In this post, we’ll share how we enhanced our rule authoring process by integrating machine learning libraries. Instead of hand-tuning every rule, Risk Analysts now get automated recommendations for parameters and thresholds, which significantly improves response times. By streamlining this workflow, we ensure that every rapid intervention is guided by the same data-driven precision as our core models, enabling faster and more confident mitigation.

Challenge

The success of our "marathon and sprint" approach depends on one crucial factor: the "sprint" has to be fast. Our rapid-response rules need to be deployed quickly and reliably. Unfortunately, this is exactly where our system required process and tooling improvements to meet the intended speed.

While our strategy was sound, the workflow was slower than intended, reducing responsiveness to emerging patterns. Since fraudsters exploit vulnerabilities until they are closed, every minute counts.

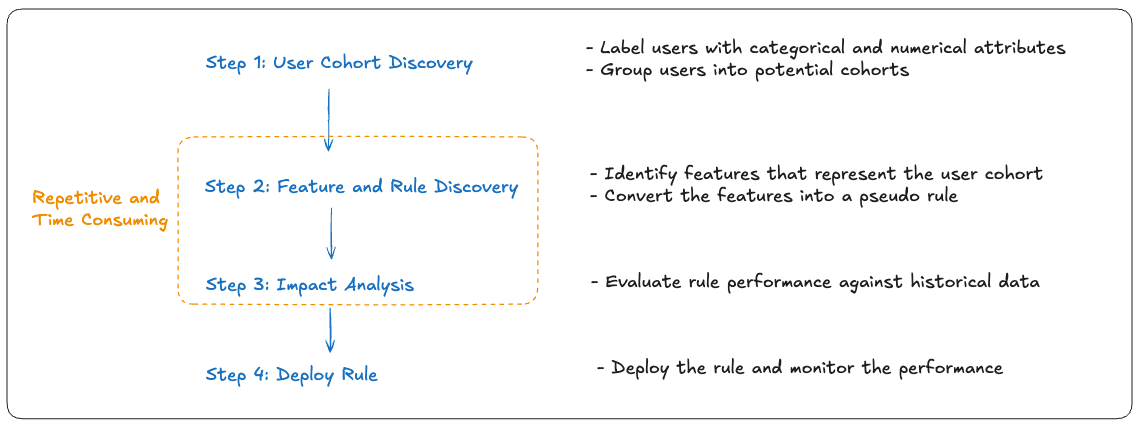

Rule Creation Workflow

We gathered feedback on the rule creation process and the analysis revealed three main areas for improvement:

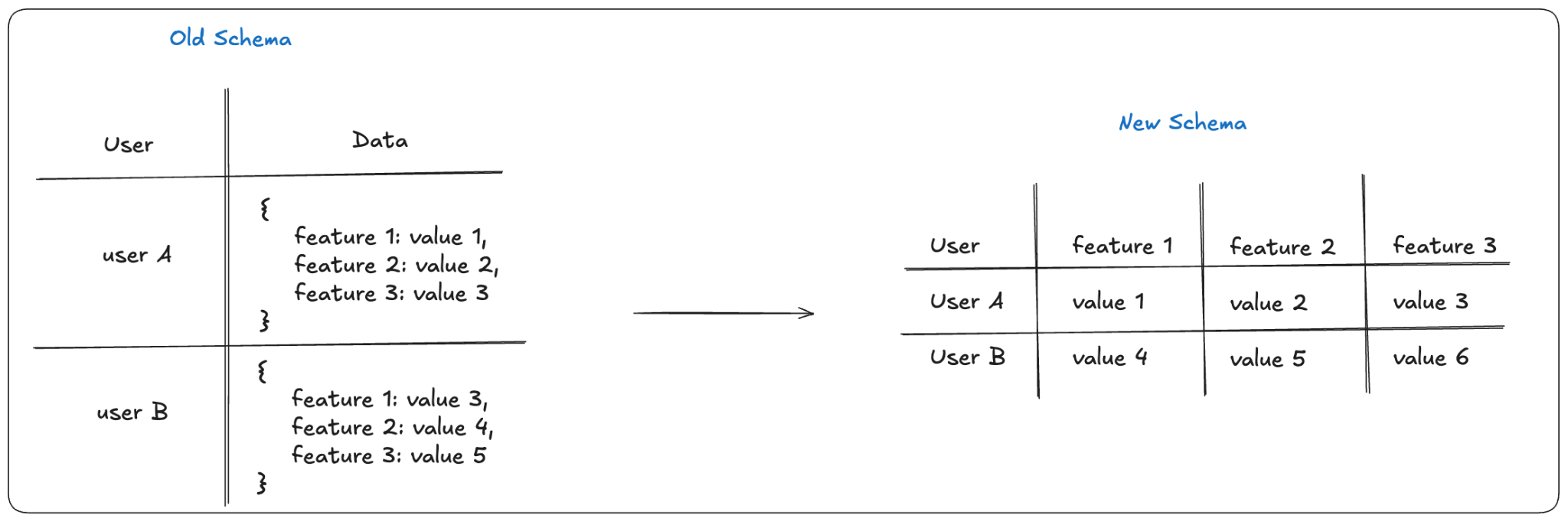

Inefficient Rule Backtesting: Rule backtesting was limited by a legacy, nested schema that stored many features in a single JSON column, making lookups and parsing inefficient at scale.

Challenging Schema Maintenance: Features are frequently added or removed as fraud patterns change, so any data modelling improvement to the backtesting data had to be flexible enough to handle those additions and removals.

Lack of Standardized Assessment: Analyst teams had internal methods for evaluating a rule's effectiveness against past data, but each team used its own variation of impact assessment. This fragmentation meant teams couldn't easily build upon each other's learnings or reuse proven assessment approaches. It also meant that a rule's measured impact could vary with the approach of the analyst who authored it.

In short, the tools and data foundation needed to support the “sprint” side of our approach required enhancements to enable timely, reliable responses.

Solution

After identifying the bottlenecks in our analyst workflow, we settled on three improvements that would raise the quality of our rules while addressing each of the problems listed above.

Improvement 1: Rebuilding the Data Foundation

Our first major hurdle was data access patterns that didn’t match our backtesting needs. A legacy JSON-heavy structure hindered efficient lookups and parsing at scale, reducing iteration speed.

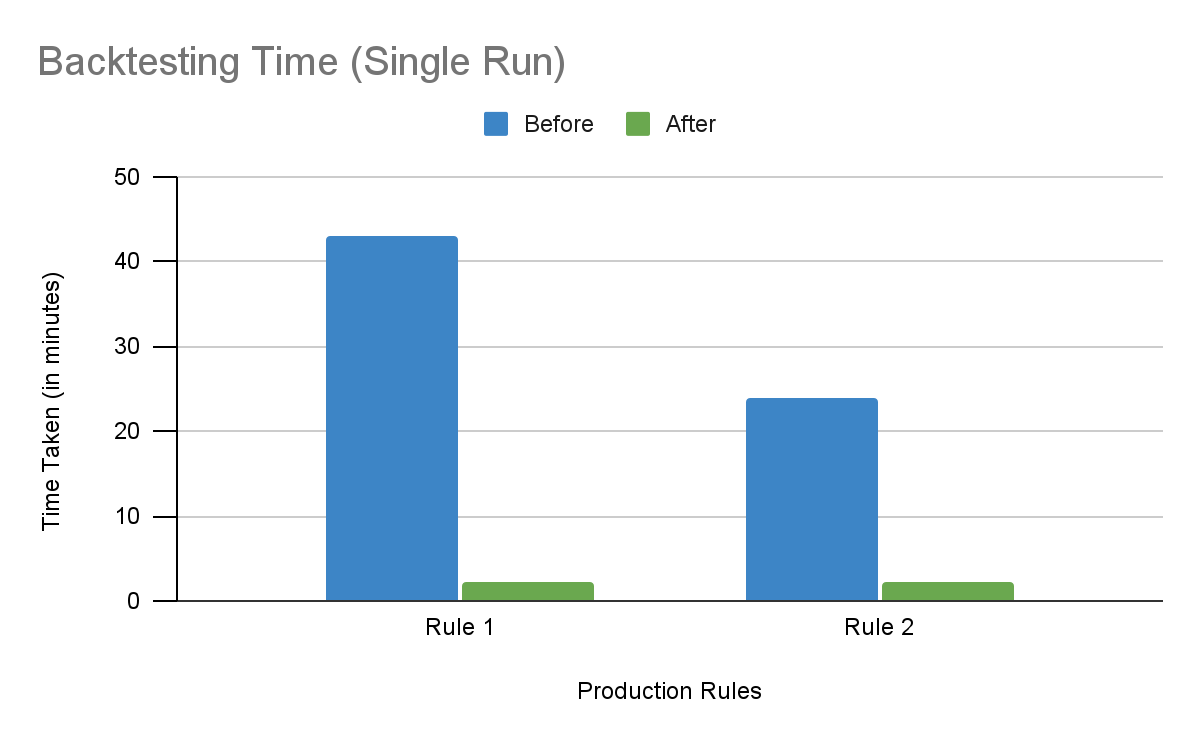

We replaced the legacy schema with a flattened, columnar design that organizes the data needed for rule authoring and backtesting. Removing expensive JSON parsing and enabling techniques like partitioning, clustering, and indexing reduced backtesting time by an order of magnitude (over 10x).
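To make the flattening idea concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not our production pipeline: the row layout, the feature names, and the `flatten_features` helper are all hypothetical. It shows how a nested JSON blob stored in a single column can be turned into one value per column, so that a backtest no longer has to parse JSON on every lookup.

```python
import json


def flatten_features(raw_json: str, sep: str = "_") -> dict:
    """Flatten a nested JSON feature blob into a single-level dict of columns.

    Hypothetical helper for illustration; nested keys are joined with `sep`.
    """
    flat = {}

    def walk(node: dict, prefix: str) -> None:
        for key, value in node.items():
            name = f"{prefix}{sep}{key}" if prefix else key
            if isinstance(value, dict):
                walk(value, name)  # descend into nested objects
            else:
                flat[name] = value  # leaf value becomes its own column

    walk(json.loads(raw_json), "")
    return flat


# Hypothetical legacy row: every feature lives inside one JSON column.
legacy_row = {
    "txn_id": "t-1",
    "features": '{"device": {"risk_score": 0.91, "is_new": true}, "amount_usd": 250.0}',
}

# Flattened row: one key per feature, ready to be stored as typed columns.
flat_row = {"txn_id": legacy_row["txn_id"], **flatten_features(legacy_row["features"])}
print(flat_row)
```

Once each feature is its own typed column, the storage engine can prune, partition, and cluster on it directly instead of scanning and parsing the whole blob.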

Improvement 2: Automating Schema Evolution

While flattening improved performance, it introduced schema evolution challenges. Analysts add new features (e.g., categorical attributes, numeric scores, or flags) often as new fraud patterns emerge, and with a flattened table each new feature means a new column. We needed a safe, automated way to evolve the schema.

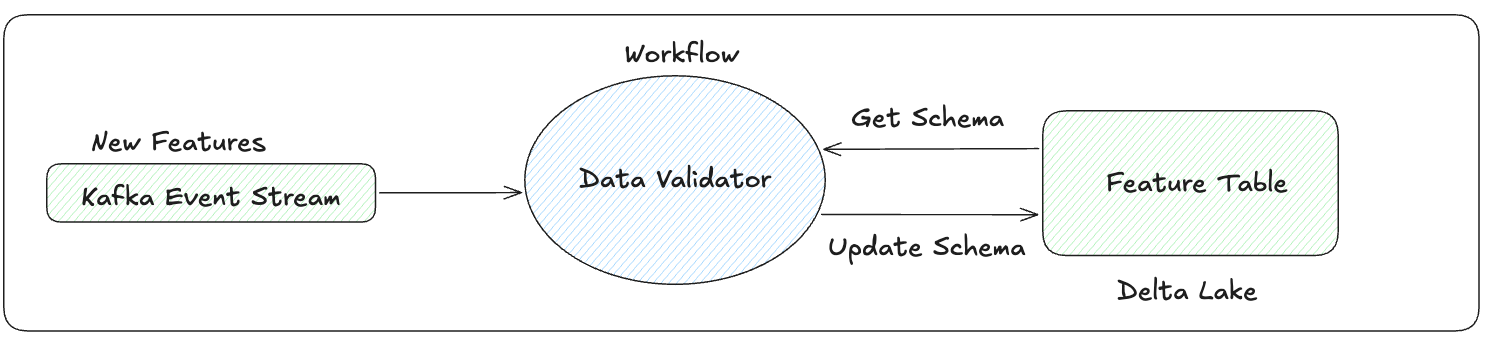

To fix this, we created an automated workflow that:

Inspects incoming feature data using schema inference to detect new fields at ingestion time.

Generates migration scripts with appropriate column types (e.g., FLOAT for numeric scores, VARCHAR for categorical features, BOOLEAN for flags).

Applies schema changes to the feature table.

Maintains backward compatibility by making all new columns nullable and preserving existing data.

The workflow is triggered whenever new features are detected during ingestion, thereby removing the need to manually maintain the schema.
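The steps above can be sketched in a few lines of Python. This is a simplified illustration, not the actual workflow: the table name, the feature names, and the `infer_sql_type` and `plan_migration` helpers are hypothetical, type inference here looks at a single sample record, and the generated SQL is generic (the exact `ALTER TABLE` syntax depends on the warehouse).

```python
import re

_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def infer_sql_type(value) -> str:
    """Map a sample Python value to a column type (hypothetical mapping)."""
    # bool must be checked first because bool is a subclass of int in Python.
    if isinstance(value, bool):
        return "BOOLEAN"
    if isinstance(value, (int, float)):
        return "FLOAT"
    return "VARCHAR"


def plan_migration(table: str, existing_columns: set, record: dict) -> list:
    """Return ALTER TABLE statements for fields not yet present in the table.

    New columns carry no NOT NULL constraint, so they are nullable and
    existing rows stay valid (backward compatible).
    """
    statements = []
    for name in sorted(record):
        if name in existing_columns or record[name] is None:
            continue  # already known, or no sample value to infer a type from
        if not _IDENTIFIER.fullmatch(name):
            raise ValueError(f"unsafe column name: {name!r}")
        col_type = infer_sql_type(record[name])
        statements.append(f"ALTER TABLE {table} ADD COLUMN {name} {col_type}")
    return statements


# Hypothetical ingestion event containing three fields the table has not seen.
existing = {"txn_id", "amount_usd"}
incoming = {
    "txn_id": "t-1",
    "amount_usd": 250.0,
    "device_risk_score": 0.91,
    "is_new_device": True,
    "country": "US",
}
migration = plan_migration("rule_features", existing, incoming)
for statement in migration:
    print(statement)
```

Only additive changes are generated in this sketch. Because nothing is dropped or retyped, rules and backtests written against the old columns keep working.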

Improvement 3: Integrated Notebook-Driven Workflow

With the core data problems solved, our next focus was the rule authoring experience. We built a tooling library for rule exploration and integrated it with a notebook-based workflow that walks an analyst through rule creation step by step. The workflow starts with selecting fraudulent transactions, continues with rule recommendation using machine learning and statistical methods, and ends with evaluating the rule against historical data. The notebook gives the analysis structure, with simple interfaces for identifying features and backtesting rules. Analysts work with clean, purpose-built Python functions that map directly to their analytical workflows.

Under the hood:

The library orchestrates a distributed computation across our compute cluster.

It leverages optimized frameworks for advanced rule validation and recommendation.

It handles all the boilerplate: optimized query generation, robust error handling, and standardized output formatting.

Through the tooling library, every analyst uses the same workflow and gets best practices by default. Every time an analyst uses the library, they are automatically running optimized, battle-tested code.
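As a rough illustration of the recommend-then-backtest steps, the sketch below uses plain Python rather than any particular ML framework. Everything in it is an assumption made for the example: the `backtest` and `recommend_threshold` functions, the single-feature rule `score >= threshold`, the sample data, and the choice to maximize recall subject to a minimum precision. A real recommendation step would typically search over many features and use an ML library instead of this exhaustive scan.

```python
from dataclasses import dataclass


@dataclass
class BacktestResult:
    flagged: int          # transactions the rule would have blocked
    true_positives: int   # flagged transactions that were actually fraud
    precision: float
    recall: float


def backtest(scores, labels, threshold) -> BacktestResult:
    """Evaluate the rule `score >= threshold` against labeled history."""
    flagged_labels = [lab for s, lab in zip(scores, labels) if s >= threshold]
    tp = sum(flagged_labels)
    total_fraud = sum(labels)
    precision = tp / len(flagged_labels) if flagged_labels else 0.0
    recall = tp / total_fraud if total_fraud else 0.0
    return BacktestResult(len(flagged_labels), tp, precision, recall)


def recommend_threshold(scores, labels, min_precision=0.9):
    """Return (threshold, result) with the highest recall that still meets
    min_precision, or None if no candidate qualifies."""
    best = None
    for candidate in sorted(set(scores)):
        result = backtest(scores, labels, candidate)
        if result.precision < min_precision:
            continue
        # Strict comparison keeps the lowest threshold when recall ties.
        if best is None or result.recall > best[1].recall:
            best = (candidate, result)
    return best


# Hypothetical history: one risk score per transaction, label 1 = fraud.
scores = [0.10, 0.20, 0.35, 0.60, 0.80, 0.85, 0.90, 0.95]
labels = [0, 0, 0, 1, 0, 1, 1, 1]

threshold, result = recommend_threshold(scores, labels, min_precision=0.9)
print(threshold, result)
```

Sharing one `backtest` function between recommendation and final evaluation is the point of the design: every analyst measures precision and recall the same way, so results are comparable across teams.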

The Impact & Next Steps

By shifting to an ML-powered rule creation workflow, we haven't just made things faster; we’ve fundamentally changed how our Risk team operates.

Drastically Reduced Response Time: What used to take days of manual data wrangling and schema updates now happens in hours. Analysts can identify a new fraud pattern and deploy a statistically optimized countermeasure in the same afternoon, significantly narrowing the window of exposure during active attacks.

Higher Precision, Fewer False Positives: By using ML libraries to suggest rule parameters, we’ve moved away from "gut-feel" thresholds. This data-driven precision helps us catch more bad actors while reducing the friction for legitimate users—a critical balance for our growth.

Engineering Efficiency: Automating schema management and backtesting has freed our engineering team from reactive "plumbing" work. They can now focus on infrastructure and long-term model architecture, while analysts are empowered to self-serve their data needs without engineering bottlenecks.

Next Steps: Towards Autonomous Risk Management

While we have successfully streamlined the "sprint," our vision is to further automate the ecosystem so that the system works for us.

Auto-Sprinting (Event-Driven Rules): We are exploring systems that trigger automated rule suggestions the moment a new fraud cluster is detected. By moving to an event-driven architecture, we aim to have the system propose a draft rule before an analyst even opens their dashboard.

One-Click "Promotion" to Models: We plan to tighten the integration with our Feature Store. The goal is a seamless "one-click" pathway where a successful, high-performing rule can be promoted into a permanent model feature, automating the transition from a temporary fix to a permanent defense.

Scaling Beyond Fraud: This notebook-driven framework is domain-agnostic. We are actively working to scale this pattern to other high-stakes risk areas, such as Account Takeovers (ATO) and Compliance, where the need for rapid, auditable decision-making is just as critical.

What took days now takes hours. We flattened our backtesting data, automated schema changes, and built a shared notebook workflow so analysts can write better rules, faster. Next, we're closing the loop by feeding rule signals back into our models so temporary fixes become permanent defenses.