Accelerating CX Agent Ramp-Up with an AI-Powered Case Grading Assistant

TL;DR: We built an AI-powered Case Grading Assistant that reduces trainee case review time from ~90 minutes to ~20 minutes - while maintaining human oversight and full auditability. The system uses LLM-based comparison grading (evaluating agent work against known-good reference cases), minimizing hallucination risk and improving consistency at scale. Key insight: anchoring evaluations to explicit reference cases and rubrics enabled faster reviews while supporting more consistently calibrated outcomes.

We've built high-throughput systems and real-time fraud detection for Coinbase Customer Experience (CX) that scale globally. Yet until recently, every training case review was still done by hand, taking up to 90 minutes per case.

Scaling operations at Coinbase isn’t just about moving faster - it’s about doing so without compromising quality, consistency, or regulatory trust.

One of the most time-intensive bottlenecks in our compliance operations is agent training and ramp-up, particularly during the training phase (the supervised training period where new agents complete practice cases before handling live customer issues). Senior Quality Control (QC) analysts manually review agent case investigations against detailed rubrics - a process that can take up to 90 minutes per case and pulls experienced reviewers away from live production queues.

We set out to rethink this workflow.

Our newly built AI-powered Case Grading Assistant automates first-pass reviews while keeping humans firmly in control.

The Scaling Problem with Manual Reviews

As agent onboarding scaled to meet case demand, the traditional training model exposed three core challenges:

Operational drag - Senior QC resources were diverted from working production cases

Consistency at scale - Even with standardized onboarding reviews, maintaining perfectly consistent rubric application across reviewers and cohorts required significant manual effort

Slower ramp-up - Agents waited days for feedback, delaying readiness

We needed a solution that could scale review capacity, standardize feedback, and support consistent application of existing QC rubrics - with human validation and full auditability.

Designing AI as an Assistant, Not an Arbiter

From day one, we made a deliberate architectural choice: the AI-powered Case Grading Assistant augments reviewers rather than replacing human judgment.

The Case Grading Assistant performs a first-pass review:

Evaluating cases against a structured QC rubric

Flagging missed signals, inconsistencies, and gaps

Producing clear, reviewable scoring and feedback

A human reviewer always validates the final outcome. This human-in-the-loop model wasn’t a compromise - it was a prerequisite for trust.

How Case Grading Works

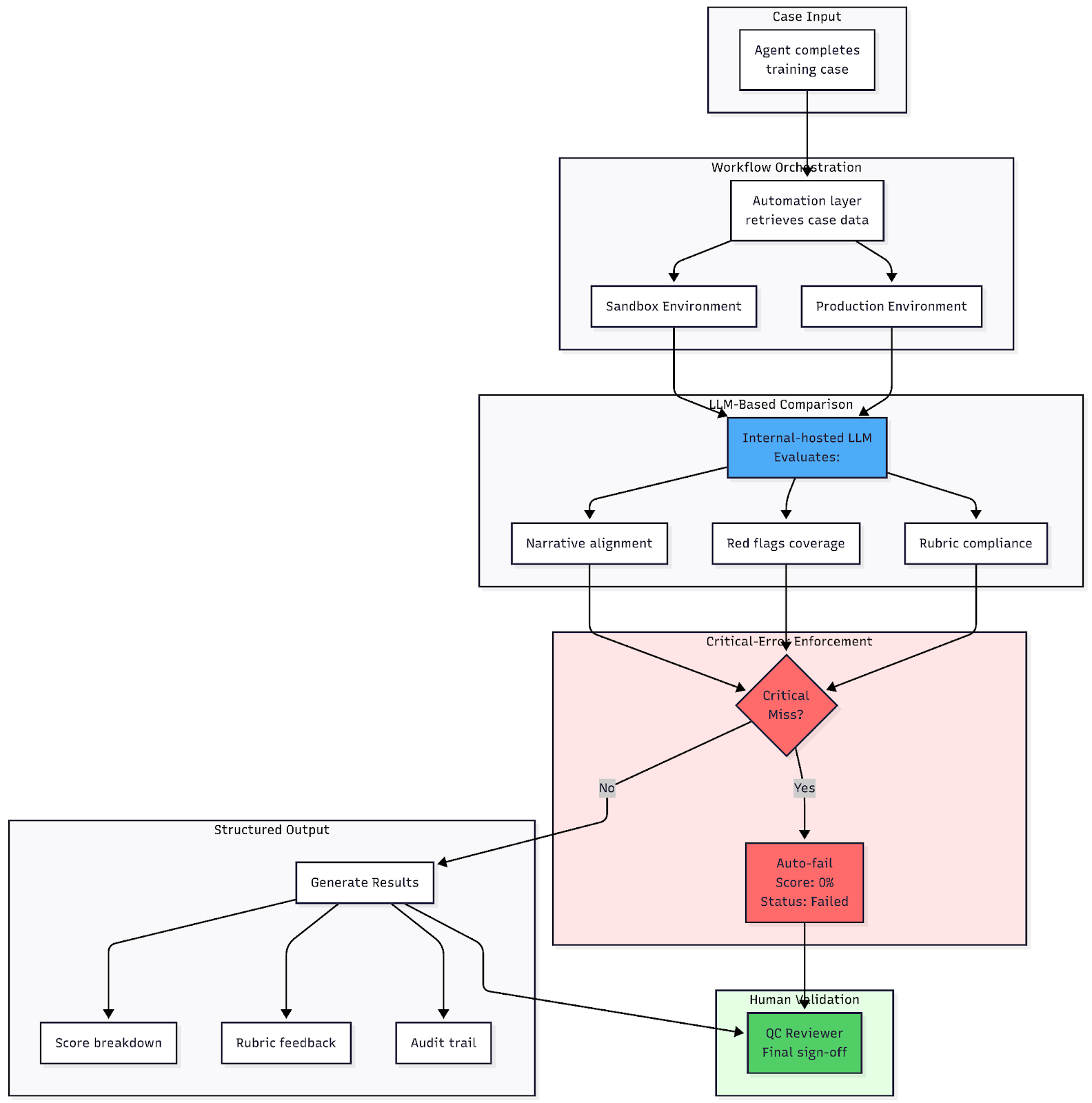

At a high level, the AI-powered Case Grading Assistant automates grading through a simple but robust pipeline:

1. Case input

As part of the training process, agents complete a fixed set of training cases that correspond to known-good reference cases in production. These case pairs form the basis for structured evaluation and are submitted to the Case Grading Assistant for review.

2. Workflow orchestration

An automation layer retrieves case data from the Sandbox and Production environments and constructs a structured grading request.

3. LLM-based comparison

A model served through Coinbase's internally hosted LLM Gateway evaluates:

Narrative alignment with the reference case.

Coverage of red flags and escalation logic.

Compliance with QC rubric criteria.

4. Critical-error enforcement

If a critical compliance miss is detected (for example, missed red flags or improper escalation), the case is automatically scored 0% with a Failed result, regardless of other metrics.

5. Structured output

Scores, rubric breakdowns, and feedback are returned for rapid human review and sign-off.

6. Human validation (non-negotiable)

Every grading decision is logged, traceable, and reviewable - then validated by a QC reviewer before finalization. This human-in-the-loop validation is a foundational requirement for regulated workflows.
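
The critical-error override in step 4 can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the production implementation: the `RubricScore` and `GradingResult` types, the weighted-average aggregation, and the 80% pass threshold are hypothetical. The only behavior taken from the pipeline above is that any critical miss forces a 0% score with a Failed result, and that every result still awaits human validation.

```python
from dataclasses import dataclass, field


@dataclass
class RubricScore:
    criterion: str       # hypothetical rubric dimension name
    score: float         # 0.0 to 1.0 for this criterion
    weight: float = 1.0  # assumed weighting; the real rubric may differ


@dataclass
class GradingResult:
    percent: float
    result: str                               # "Passed" or "Failed"
    critical_errors: list[str] = field(default_factory=list)
    needs_human_validation: bool = True       # a QC reviewer always signs off


def grade(scores: list[RubricScore], critical_errors: list[str],
          pass_threshold: float = 80.0) -> GradingResult:
    # Fail-safe default: any critical miss overrides every other metric.
    if critical_errors:
        return GradingResult(0.0, "Failed", list(critical_errors))

    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("rubric must have positive total weight")

    # Assumed aggregation: weighted average of per-criterion scores.
    percent = 100.0 * sum(s.score * s.weight for s in scores) / total_weight
    result = "Passed" if percent >= pass_threshold else "Failed"
    return GradingResult(round(percent, 1), result)
```

Checking for critical errors before any arithmetic keeps the override impossible to dilute: a strong narrative score cannot offset a missed red flag.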

The AI Was More Consistent Than Humans

Early in deployment, we expected to spend most of our time debugging AI errors. Instead, we discovered something unexpected.

During testing, we frequently found cases where the AI's grading differed from that of human reviewers. In more than a few instances, we spent hours digging into discrepancies - initially assuming the model had made a mistake - only to realize that the AI had applied the rubric more consistently than its human counterpart.

This pattern repeated across multiple cohorts. The AI consistently caught:

Missed escalation triggers that reviewers had mentally downgraded.

Incomplete documentation.

Rubric criteria that had been informally reinterpreted over time.

That experience reshaped our thinking. While we initially set out to improve review throughput, comparison-based grading helped us improve speed, quality, and consistency. In pilot cohorts, the AI-powered Case Grading Assistant achieved over 95% agreement with senior reviewers and demonstrated a 100% detection rate for critical errors. By anchoring evaluations to explicit reference cases and rubric criteria, the system reduced subjective variance and made gaps easier to spot - for humans and AI alike.

The lesson wasn't just about automation. It was about using AI to hold ourselves accountable to our own standards.

Why Comparison-Based Grading Works

Rather than asking the model to grade cases from scratch, we designed the system to compare agent output against a known-good reference case.

This approach delivered several key advantages:

Reduced hallucination risk - The model evaluates relative to known-good examples rather than generating arbitrary standards.

More deterministic outcomes - Comparison yields consistent, explainable results that reviewers can validate.

Built-in ground truth - Reference cases serve as both training data and evaluation baseline.

Direct alignment with existing standards - Leverages the rubrics QC teams already trust.

Technical Deep Dive

For readers interested in the implementation details:

Model Selection

We evaluated several models available through Coinbase's LLM Gateway and selected one that balanced:

Cost efficiency for high-volume evaluation (hundreds of cases per cohort).

Latency requirements (sub-30-second grading for near-real-time feedback).

Data residency and compliance requirements (all case data remains within Coinbase-controlled infrastructure).

Prompt Engineering

Our prompts follow a structured format:

System context establishing the QC rubric and scoring criteria.

Reference case as the evaluation baseline.

Agent case to be evaluated.

Explicit instructions to compare specific rubric dimensions.

Required output schema (JSON with scores, justifications, and flags).
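
To make that structure concrete, here is a minimal sketch of how such a prompt might be assembled. Everything specific in it is an assumption for illustration: the `build_grading_messages` function, the role/content message format, the wording of the instructions, and the exact shape of `OUTPUT_SCHEMA` are hypothetical and do not describe the LLM Gateway's actual interface.

```python
import json

# Hypothetical output schema. The source only states that the output is
# JSON containing scores, justifications, and flags.
OUTPUT_SCHEMA = {
    "scores": {"<rubric_dimension>": "number from 0 to 100"},
    "justifications": {"<rubric_dimension>": "string"},
    "flags": ["string"],
}


def build_grading_messages(rubric: str, reference_case: str, agent_case: str,
                           dimensions: list[str]) -> list[dict[str, str]]:
    # System context: the QC rubric and scoring criteria.
    system = (
        "You are a QC grading assistant. Apply only the rubric below.\n\n"
        f"RUBRIC:\n{rubric}"
    )
    # User content: reference case first, then the agent case, then the
    # explicit comparison instructions and the required output schema.
    user = "\n\n".join([
        f"REFERENCE CASE (known-good baseline):\n{reference_case}",
        f"AGENT CASE (to be evaluated):\n{agent_case}",
        "Compare the agent case against the reference case on each of these "
        "rubric dimensions: " + ", ".join(dimensions) + ".",
        "Respond with JSON only, matching this schema:\n"
        + json.dumps(OUTPUT_SCHEMA, indent=2),
    ])
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]
```

Placing the reference case ahead of the agent case keeps the baseline fixed in the prompt, so the model is asked to compare rather than to invent a standard.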

Evaluation Metrics

In internal pilots across multiple onboarding cohorts, we tracked several metrics to monitor AI performance:

Agreement rate - Percentage of AI grades that match human reviewer validation.

False positive rate - Cases flagged as failures by the system that human reviewers ultimately determined met the required standards.

False negative rate - Critical misses the AI didn't catch.

Grading consistency - Standard deviation of scores across similar cases.
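
These metrics can be computed from paired AI and human outcomes. The sketch below is one possible formulation, not the team's actual tooling: the `GradedCase` record and `pilot_metrics` function are hypothetical, and the denominators are assumptions (false positives are measured against cases the human passed, false negatives against cases the human failed). The standard deviation is meant to be taken over a group of similar cases supplied by the caller.

```python
from dataclasses import dataclass
from statistics import pstdev


@dataclass
class GradedCase:
    ai_failed: bool      # AI first-pass result
    human_failed: bool   # validated result from the QC reviewer
    ai_score: float      # AI percentage score


def _rate(numerator: int, denominator: int) -> float:
    # Return 0.0 rather than dividing by zero when a group is empty.
    return numerator / denominator if denominator else 0.0


def pilot_metrics(cases: list[GradedCase]) -> dict[str, float]:
    agree = sum(c.ai_failed == c.human_failed for c in cases)
    false_pos = sum(c.ai_failed and not c.human_failed for c in cases)
    false_neg = sum(c.human_failed and not c.ai_failed for c in cases)
    human_pass = sum(not c.human_failed for c in cases)
    human_fail = sum(c.human_failed for c in cases)
    return {
        "agreement_rate": _rate(agree, len(cases)),
        # Assumed denominator: cases the human reviewer passed.
        "false_positive_rate": _rate(false_pos, human_pass),
        # Assumed denominator: cases the human reviewer failed.
        "false_negative_rate": _rate(false_neg, human_fail),
        # Population standard deviation of AI scores within this group.
        "score_std_dev": pstdev([c.ai_score for c in cases]) if cases else 0.0,
    }
```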

Engineering for Trust (Not Just Accuracy)

Building AI for compliance workflows required going beyond raw model performance. We designed the system to support:

Determinism - Rubric-driven prompts and constrained outputs reduce variability.

Auditability - Every score and decision is logged for compliance review.

Fail-safe defaults - Critical errors override all other scoring, mirroring conservative human review standards.

Operational simplicity - The interface remained intentionally lightweight to avoid tool fatigue during onboarding.
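
Two of these properties, constrained outputs and auditability, lend themselves to a short sketch. The code below is illustrative only: `parse_model_output`, `audit_record`, the required keys, the 0 to 100 score range, and the audit fields are all assumptions, not the system's actual schema or logging format. The idea is to reject malformed model output outright instead of guessing at it, and to tie every logged decision to a hash of the exact prompt that produced it.

```python
import hashlib
import json
from datetime import datetime, timezone

REQUIRED_KEYS = {"scores", "justifications", "flags"}  # assumed schema


def parse_model_output(raw: str) -> dict:
    """Parse and validate the model's JSON; raise ValueError if malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        raise ValueError("model output is missing required keys")
    if not isinstance(data["scores"], dict):
        raise ValueError("'scores' must be an object")
    for name, value in data["scores"].items():
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        if not is_number or not 0 <= value <= 100:
            raise ValueError(f"score for {name!r} is not a number in 0..100")
    return data


def audit_record(case_id: str, prompt: str, raw_output: str) -> str:
    """Build one JSON line tying a grading decision to its exact inputs."""
    return json.dumps({
        "case_id": case_id,
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        "raw_output": raw_output,
        "status": "pending_human_validation",  # finalized only after QC sign-off
    }, sort_keys=True)
```

A caller would typically treat a `ValueError` here as a reason to route the case to full manual review rather than retrying silently.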

The Impact

In internal pilots across multiple onboarding cohorts, the AI-powered Case Grading Assistant demonstrated measurable improvements:

Significant time reduction - First-pass review time dropped from approximately 90 minutes to roughly 20 minutes per case in observed pilots.

On-time learner progression - Near-real-time feedback reduced QC review delays and helped onboarding cohorts progress through training on schedule.

Improved consistency - Standardized rubric enforcement across cohorts showed reduced scoring variance.

Enhanced focus - Senior reviewers gained capacity to prioritize high-risk, live production work.

From early proof-of-concept testing through full production rollout across multiple onboarding cohorts, this translated into hundreds of hours saved and significant cost avoidance. Just as importantly, grounding feedback in real case investigations - rather than abstract training scenarios - allows new analysts to receive more practical, context-rich guidance earlier in the onboarding process.

What We Learned

This work reinforced several principles we now apply broadly to enterprise AI:

AI Should Amplify Experts

Early in the project, we considered full automation. But we quickly realized how much nuance compliance work requires. That realization shaped our human-in-the-loop architecture, and the result is a tool that makes experts more effective, not obsolete. The AI handles the repetitive rubric application; humans bring judgment, context, and accountability.

Explicit, High-Code Workflows Outperform Opaque Automation

We deliberately avoided "black box" solutions. Every AI decision is traceable, logged, and tied to specific rubric criteria. This transparency wasn't just for compliance - it built trust with the QC team, who could see exactly why the AI scored a case a certain way. When reviewers can audit the AI's reasoning, they're more likely to trust and adopt it.

Comparison Beats Free-Form Generation in Regulated Domains

Asking an LLM to grade cases from scratch introduces too much variability in high-stakes environments. Anchoring to reference cases constrains the model's output space, reduces hallucination risk, and produces more consistent, explainable results. In regulated workflows, determinism isn't optional - it's foundational.

Trust Is Earned Through Observability, Not Claims

We didn't launch with promises about "AI accuracy." We launched with metrics, audit logs, and side-by-side human validation. Trust came from demonstrating reliability over time - showing QC analysts that the system caught what they caught (and sometimes more), with full transparency into how it reached those conclusions.

What’s Next

The AI-powered Case Grading Assistant represents an evolving approach to scaling quality operations. Areas of potential future exploration include:

Deeper integration with QA platforms.

Expanded rubric support across additional workflows.

Enhanced feedback analytics for targeted training.

Personalized learning insights that use grading signals to identify proficiency gaps and guide tailored feedback and learning paths.

UI-based workflows beyond spreadsheet interfaces.

Each step follows the same principle: scale responsibly, with humans in the loop.

Closing Thoughts

AI can unlock enormous efficiency in enterprise operations - but only when it’s engineered with the same rigor as any mission-critical system.

By treating AI systems as software, embedding governance from day one, and designing explicitly for transparency and human oversight, the AI-powered Case Grading Assistant demonstrates how automation can scale responsibly in regulated environments.

As organizations explore AI across operational workflows, the lesson is simple: trust isn’t achieved through model performance alone - it’s built through observability, clear workflows, and humans remaining firmly in the loop.

To learn more about careers at Coinbase, visit www.coinbase.com/careers. To learn more about crypto and Coinbase, visit www.coinbase.com.